Lynn Elwood, VP of Cloud and Services Solutions at OpenText, presented on managing information in a cloud world at today’s AIIM chapter meeting in Toronto. This is of particular interest to Canadians, since most of the cloud service offerings that we see are in the US, and many companies are not comfortable with keeping their private data in a jurisdiction where it can be somewhat easily exposed to foreign government and intelligence agencies.

She used a building analogy to talk about cloud services:

- Infrastructure as a service (IaaS) is like a piece of serviced land on which you need to build your own building and worry about your connections to services. If your water or electricity is off, you likely need to debug the problem yourself although if you find that the problem is with the underlying services, you can go back to the service provider.

- Platform as a service (PaaS) is like a mobile home park, where you are responsible for your own dwelling but not for the services, and there are shared services used by all residents.

- Software as a service (SaaS) is like a condo building, where you own your own part of it, but it’s within a shared environment. SaaS by Gartner’s definition is multi-tenant, and that’s the analogy: you are at the whim, to a certain extent, of the building management in terms of service availability, but at a greatly reduced cost.

- Dedicated, hosted or managed is like a private house on serviced land, where everything in the house is up to you to maintain. In this set of analogies, I’m not sure that there is a lot of distinction between this and IaaS.

- On-premises is like a cottage, where you probably need to deal with a lot of the services yourself, such as water and septic systems. You can bring in someone to help, but it’s ultimately all your responsibility.

- Hybrid is a combo of things — cloud to cloud, cloud to on-premise — such as owning a condo and driving to a cottage, where you have different levels of service at each location but they share information.

- Managed services is like having a property manager, although it can be cloud or on-premise, to augment your own efforts (or those of your staff).

Regardless of the platform, anything that touches the cloud is going to have a security consideration as well as performance/up-time SLAs if you want to consider it as part of your core business. From my experience, on-premise solutions can be just as insecure and unstable as any cloud offering, so it’s good to know what you’re comparing against when you are looking at cloud versus on-premise.

Most organizations require that their cloud provider have some level of certification: of the facility (data centre), platform (infrastructure) and service (application). Elwood talked about the cloud standards that impact these, including ISO 27001, and SOC 1, 2 and 3.

A big concern is around applications in the cloud, namely SaaS such as Box or Salesforce. Although IT will be focused on whether the security of that application can be breached, business and information managers need to be concerned about what type of data is being stored in those applications and whether it potentially violates any privacy regulations. Take a good look at those SaaS EULAs (Elwood took us through some Apple and Google examples) and have your lawyers look at them as well if you’re deploying these solutions within the enterprise.

You also need to look at data residency requirements (as I mentioned at the start): where the data resides, the sovereignty of the hosting company, the routing between you and the data even if the data resides in your own country, and the backup policies of the hosting company. The US Patriot Act allows the US government to access any data that passes through, is stored in, or is hosted by a company that is domiciled in the US; other countries are also adding similar laws. Although a company may have a data centre in your country, if they’re a US company, they probably default to storing, processing and backing up data in the US: check out the Microsoft hosting and data processing agreement, for example, which specifies that your data will be hosted and/or processed in the US unless you explicitly request otherwise.

There’s an additional issue: even if your data has the appropriate residency, if an employee is travelling to a restricted country and accesses the data remotely, you may be violating privacy regulations; not all applications have the ability to filter otherwise authenticated access based on IP address. If you add this to the ability of foreign governments to demand device passwords in order to enter a country, the information accessible via an employee’s computer (not just the information stored on it) is at risk of exposure.
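To make the IP filtering point concrete, here is a minimal sketch of what it could look like for an application to refuse an otherwise authenticated user based on where the request comes from. This is my own illustration, not something Elwood showed: the function name `is_access_allowed` and the `RESTRICTED_NETWORKS` list are hypothetical, and the address ranges are documentation-only placeholders rather than real country allocations.

```python
import ipaddress

# Hypothetical blocklist: documentation-only ranges (RFC 5737) stand in for
# networks that an organization has mapped to restricted jurisdictions.
RESTRICTED_NETWORKS = [
    ipaddress.ip_network("203.0.113.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def is_access_allowed(authenticated: bool, client_ip: str) -> bool:
    """Allow access only for authenticated users outside restricted networks."""
    if not authenticated:
        return False
    address = ipaddress.ip_address(client_ip)
    # Deny if the client address falls inside any restricted network.
    return not any(address in network for network in RESTRICTED_NETWORKS)


print(is_access_allowed(True, "192.0.2.10"))   # authenticated, unrestricted network
print(is_access_allowed(True, "203.0.113.5"))  # authenticated, restricted network
```

A real deployment would need a maintained mapping from IP ranges to countries, and a check like this can be sidestepped with a VPN, so treat it as one control among several rather than a guarantee.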

Elwood showed a map of the information governance laws and regulations around the world, and it’s a horrifying mix of acronyms for data protection and privacy rules, regulated records retention, eDiscovery requirements, information integrity and authenticity, and reporting obligations. There’s a new EU regulation — the General Data Protection Regulation (GDPR) — that is going to be a game-changer, harmonizing laws across all 28 member nations and applying to any data collected about an EU citizen even outside the EU. The GDPR includes increased consent standards, stronger individual data rights, stronger breach notification, increased governance obligation, stronger recordkeeping requirements, and data transfer constraints. Interestingly, Canada is recognized as one of the countries that is deemed to have “adequate protection” for data transfer, along with Andorra, Argentina, the Faroe Islands, the Channel Islands (Guernsey and Jersey), Isle of Man, Israel, New Zealand, Switzerland and Uruguay. In my opinion, many companies aren’t even aware of the GDPR, much less complying with it, and this is going to be a big wake-up call. Your compliance teams need to be aware of the global landscape as it impacts your data usage and applications, whether in the cloud or on premise; companies can receive huge fines (up to 4% of annual revenue) for violating GDPR whether they are the “owner” of the data or just a data processor/host.
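To get a feel for the scale of those fines, here is a rough sketch of the ceiling calculation. The talk only cited the 4% figure; the EUR 20 million floor and the "whichever is higher" rule are my reading of GDPR Article 83(5), and the function name `gdpr_fine_ceiling` is purely illustrative. This is the upper bound, not a prediction of what a regulator would actually levy.

```python
def gdpr_fine_ceiling(annual_revenue_eur: float) -> float:
    """Upper bound on a top-tier GDPR fine, in euros.

    Assumes the Article 83(5) rule: the greater of EUR 20 million
    or 4% of total worldwide annual revenue.
    """
    return max(20_000_000.0, 0.04 * annual_revenue_eur)


# A company with EUR 1 billion in annual revenue.
print(gdpr_fine_ceiling(1_000_000_000))
# A company with EUR 100 million in annual revenue hits the fixed floor instead.
print(gdpr_fine_ceiling(100_000_000))
```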

OpenText has a lot of GDPR information on their website that is not specific to their products if you want to read more.

There are a lot of benefits to cloud when it comes to information management, and a lot of things to consider: agility to grow and change quickly; a services approach that requires partnering with the service provider; mobility capabilities offered by cloud platforms that may not be available for on premise; and analytics offered by cloud vendors within and across applications.

She finished up with a discussion on the top areas of concern for the attendees: security, regulations, GDPR, data sovereignty, consumer applications, and others. Great discussion amongst the attendees, many of whom work in the Canadian financial services industry: as expected, the biggest concerns are about data residency and sovereignty. GDPR is seen as having the potential to level the regulatory playing field by making everyone comply; once the data centres and service providers start to comply, it will be much easier for most organizations to outsource that piece of their compliance by moving to cloud services. I think that cloud service providers are already doing a better job at security and availability than most on-premise systems, so once they crack the data residency and sovereignty problem there is little reason to have a private data centre. IT’s concern has mostly been around security and availability, but now is the time for information and compliance managers to get involved to ensure that privacy regulations are supported by these platforms.

There are Canadian companies using cloud services, even the big banks and government, although I am guessing that it’s for peripheral rather than core services. Although some are doing this “accidentally” as the only way to share information with external participants, it’s likely time for many companies to revisit their information management strategies to see if they can be more inclusive of properly vetted cloud solutions.

We did get a very brief review of OpenText and their offerings at the end, including their software solutions and their EIM cloud offerings under the OpenText Cloud banner. They are holding their Enterprise World user conference in Toronto this July, making them the first (but likely not the last) big software company to see the benefits of a non-US North American conference location.

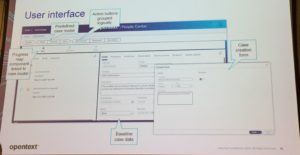

Similar to the approach of other vendors, OpenText equates “case management” with “vertical application development” to a certain extent, and getting to case handling quickly needs a blueprint to quick-start solution development. To that end, they provide an accelerator as part of Process Suite that includes a pre-defined case model and entities to provide a starting point for developing a case management application, particularly incident management or service requests. Essentially, it’s a sample app/template, albeit a well-structured one that can easily be modified for actual solutions; they have no illusions that this is going to be an out-of-the-box solution for anyone, but rather a guide for people creating new case management applications so that they don’t need to start from scratch.

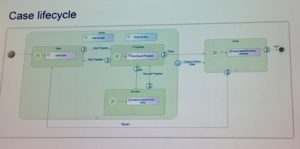

If you refer back to the more complete description of AppWorks Low Code that I gave in the previous post, they have defined entities, forms, layouts and a case lifecycle that fit a wide variety of request-style case management applications.