Except for a hiatus in 2017, I’ve been at every bpmNEXT since its inception in 2013, created and hosted by Bruce Silver and Nathaniel Palmer as a showcase for new ideas in BPM and related technologies. This is not a conference for (potential) customers, but a place for vendors, researchers and analysts to come together to exchange ideas about what’s happening in the marketplace and the technology labs. Most of the agenda is made up of 30-minute demo sessions with a few panels and keynotes sprinkled in.

Nathaniel Palmer started our first day with a look forward at the next five years of BPM by considering the five-year span from 2015 to 2020 and how the predictions from his first predictions keynote are playing out. In 2015, he talked about intelligent automation; today, we’re seeing robots and rules-based automation as an integral part of how business is done. This is pretty crucial, because the average number of systems required to present a complete view of a customer is 13.2 (!), 8 of which are external, with 80% of firms stating that they use more than 10 systems to get that 360-degree view. He talked about the need for an intelligent automation platform that includes robotic automation, AI and machine learning, decision management, and process management, communicating with events and data via an event gateway/bus. He believes that the role of a BPMS is also to provide the framework for development and to build the user interface – an idea that I’ll be debating somewhat in my keynote tomorrow – but sees always-on, context-driven devices such as smart speakers as the future of how we interact with systems rather than traditional computers and smartphones. That means that conversational interaction will take over from worklist metaphors for common processes for consumers and employees; my interpretation of this is that the task-focused activities are those that will be automated, leaving the more fluid activities for people to deal with.

A consideration of this changing nature of automation is how to model this. Our traditional workflows have a pre-defined path, whereas intelligent automation (with more of a case management/ad hoc paradigm) has more adaptable processes driven by rules and business context. It’s more like using Waze for dynamically-adjusted driving directions rather than a pre-conceived idea of what route to follow. The danger with this – in my experience with Waze and adaptable business processes – is that you could end up on a route that is not generally followed, messes up the people who have to get involved along the route, and definitely isn’t repeatable or scalable: better for that specific instance and its participants, but possibly detrimental to others. The potential gain is, of course, that the process as a whole is more resilient because it responds to events by determining an action that will reach the goal, and you may just find a new and better way of doing something. Respond to events, definitely, but at some point take a step back and consider the impact of the new pathways that you’re carving out.

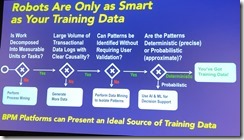

He spoke about problems with AI/ML and training data biases – robots are only as smart as your training data – and highlighted that BPM platforms are a great source of training data via process mining and analysis.

Insightful as always, and it will be interesting to see these themes play out in the demos over the next three days.

Francois Bonnet from ITESOFT presented on customer interactions and automation, and the use of BPMN-driven robots to guide customer experience. In a first for bpmNEXT, the demo included an actual physical human-shaped robot (which was 3D-printed from an open source project) that can do voice recognition, text to speech, video capture, movement tracking and facial recognition. The robot’s actions were driven by a BPMN process model, with activities such as searching for humans, recognizing faces, speaking phrases, processing input and making branching decisions. The process model was shown simultaneously, with the execution path updated in real time as it moved through the process, with robot actions shown as service activities. The scenario was the robot interacting with a customer in a mobile phone shop, recognizing the customer or training a new facial recognition, asking what service is required, then stepping through acquiring a new phone and plan. He walked through how the BPMN model was used, with both synchronous and asynchronous services for controlling the robot and invoking functions such as classifier training, and human activities for interacting with the customer. Interesting use of BPMN as a driver for real robot actions, showing integration of recognition, RPA, AI, image capture and business services such as customer enrolment and customer ID validation.
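To make the idea of a process model driving robot actions a bit more concrete, here is a minimal sketch in Python. It is not ITESOFT's implementation, which wasn't shown at the code level: `FakeRobot`, `ServiceTask`, `ExclusiveGateway`, `run` and the node names are all hypothetical, and a real BPMN engine would add events, asynchronous services and human tasks. The sketch only shows a tiny interpreter walking a model of service activities and one branching decision, recording the execution path as it goes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Union


class FakeRobot:
    """Hypothetical stand-in for the robot's capabilities (not a real robot API)."""

    def __init__(self, known_faces):
        self.known_faces = set(known_faces)
        self.log: List[str] = []

    def recognize_face(self, ctx: dict) -> None:
        ctx["known"] = ctx["face"] in self.known_faces
        self.log.append("recognize_face")

    def train_face(self, ctx: dict) -> None:
        self.known_faces.add(ctx["face"])
        self.log.append("train_face")

    def say(self, phrase: str) -> Callable[[dict], None]:
        def action(ctx: dict) -> None:
            self.log.append(f"say:{phrase}")
        return action


@dataclass
class ServiceTask:
    action: Callable[[dict], None]   # robot action invoked by this activity
    next_id: Optional[str]           # following node, or None to end the process


@dataclass
class ExclusiveGateway:
    condition: Callable[[dict], bool]
    if_true: str
    if_false: str


Node = Union[ServiceTask, ExclusiveGateway]


def run(process: Dict[str, Node], start: str, ctx: dict) -> List[str]:
    """Walk the model from `start` and return the ids of the nodes visited."""
    path: List[str] = []
    node_id: Optional[str] = start
    while node_id is not None:
        path.append(node_id)
        node = process[node_id]
        if isinstance(node, ExclusiveGateway):
            node_id = node.if_true if node.condition(ctx) else node.if_false
        else:
            node.action(ctx)
            node_id = node.next_id
    return path


def build_shop_process(robot: FakeRobot) -> Dict[str, Node]:
    """Assumed, heavily simplified version of the phone shop scenario."""
    return {
        "recognize": ServiceTask(robot.recognize_face, "known?"),
        "known?": ExclusiveGateway(lambda ctx: ctx["known"], "greet", "train"),
        "train": ServiceTask(robot.train_face, "greet"),
        "greet": ServiceTask(robot.say("What service do you need?"), None),
    }


robot = FakeRobot(known_faces={"alice"})
new_customer_path = run(build_shop_process(robot), "recognize", {"face": "bob"})
returning_customer_path = run(build_shop_process(robot), "recognize", {"face": "alice"})
```

The returned path is the same information that the demo displayed visually: which activities and branches the process instance actually passed through.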

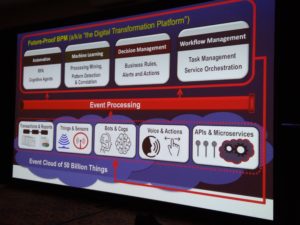

Palmer’s Future-Proof BPM architecture (what others are calling a digital transformation platform) brings together a variety of capabilities that can be provided by many vendors or other organizations, and is fed by events. In fact, the core capabilities (automation, machine learning, decision management, workflow management) also generate events that feed back into the data flooding into these processes. BPM platforms have the ability to become the orchestrating platforms for this, which is possibly why many of the BPMS vendors are rebranding as low-code application development environments, but be aware of fundamental differences in the underlying architecture: do they support modularity and microservices, or are they just lifting and shifting to monolithic containers in the cloud?
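As a rough illustration of capabilities that are both fed by events and generate events of their own, here is a small in-process sketch in Python. Everything in it is an assumption made for illustration: the `EventBus` class, the event names (`order.received`, `decision.made`, `task.created`), the two services and the 1000 approval threshold are hypothetical, and a real platform would use a proper event gateway or message broker rather than an in-memory queue.

```python
from collections import defaultdict, deque


class EventBus:
    """Hypothetical in-memory event bus; stands in for an event gateway."""

    def __init__(self):
        self._handlers = defaultdict(list)
        self._queue = deque()
        self.history = []  # event types in the order they were processed

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        self._queue.append((event_type, payload))

    def drain(self):
        """Process queued events, including any published by handlers."""
        while self._queue:
            event_type, payload = self._queue.popleft()
            self.history.append(event_type)
            for handler in self._handlers[event_type]:
                handler(self, payload)


def decision_service(bus, payload):
    # Decision management: consumes an event and emits its decision as a new event.
    approved = payload["amount"] <= 1000  # assumed business rule
    bus.publish("decision.made", {**payload, "approved": approved})


def workflow_service(bus, payload):
    # Workflow management: reacts to the decision and emits a task event.
    step = "auto_fulfil" if payload["approved"] else "manual_review"
    bus.publish("task.created", {**payload, "step": step})


bus = EventBus()
bus.subscribe("order.received", decision_service)
bus.subscribe("decision.made", workflow_service)
bus.publish("order.received", {"order_id": 1, "amount": 250})
bus.drain()
```

Because each capability only knows about event types and not about the other services, they could in principle come from different vendors and be deployed separately, which is the modularity question raised above.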