On the home stretch of the Wednesday agenda, with the last session of the day: the final four demos.

BPM in the Cloud: Changing the Playing Field – Eric Herness, IBM

IBM Bluemix process-related cloud services, including cognitive services leveraging Watson. The claims process demo starts by uploading an image of a vehicle and passing it to Watson image recognition for visual classification. The returned values show the confidence in each vehicle classification, such as “car”, and any results over 90% are sent to the Alchemy taxonomy service to align them: in the demo, Watson returned “cars” and “sedan” with more than 90% confidence, and the taxonomy service determined that sedan is a subset of cars. This allows the claim to be routed to the correct process for the type of vehicle. If Watson has not been trained for the specific type of vehicle, the image won’t be classified with a sufficient level of confidence, and the claim is passed to a work queue for manual classification. Unrecognized images can then be added to the classifier, either as examples of an existing classification or as a new classification. Predictive models based on Spark machine learning and analytics of past cases predict whether a claim should be approved, along with the degree of confidence in that decision; at some point, as this confidence increases, some claims could be approved automatically. Good examples of how to incorporate cognitive computing to make business processes smarter, using cognitive services that could be called from any BPM system, or any other app that can call REST services.
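
The routing step described above can be sketched roughly as follows. This is only an illustration of the logic as I understood it from the demo, not IBM's implementation: the `Classification` type, the `TAXONOMY_PARENT` lookup (a stand-in for the taxonomy service), the queue and process names, and the choice to route on the most specific label are all my own assumptions, and no Watson or Alchemy API is actually called.

```python
from dataclasses import dataclass

# The demo forwarded only results with more than 90% confidence.
CONFIDENCE_THRESHOLD = 0.90


@dataclass
class Classification:
    """Hypothetical shape of one image classification result."""
    label: str
    confidence: float


# Hypothetical stand-in for the taxonomy service: child label -> parent label.
TAXONOMY_PARENT = {"sedan": "cars"}


def most_specific(labels):
    """Drop any label that is the parent of another label in the same set."""
    parents = {TAXONOMY_PARENT[l] for l in labels if l in TAXONOMY_PARENT}
    return [l for l in labels if l not in parents]


def route_claim(classifications):
    """Pick a target process, or fall back to manual classification."""
    confident = [
        c.label for c in classifications if c.confidence > CONFIDENCE_THRESHOLD
    ]
    if not confident:
        # Nothing recognized with enough confidence: a person classifies it.
        return "manual-classification-queue"
    # Assumption: route on the most specific confident label.
    return f"claims-process:{most_specific(confident)[0]}"


if __name__ == "__main__":
    results = [Classification("cars", 0.97), Classification("sedan", 0.93)]
    print(route_claim(results))
    print(route_claim([Classification("truck", 0.40)]))
```

With the two labels from the demo, the sketch routes on “sedan” because the lookup marks it as a subset of “cars”; an image with no label above the threshold goes to the manual queue.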

Model, Generate, Compile in the Cloud and Deploy Ready-To-Use Mobile Process Apps – Rafael Bortolini and Leonardo Luzzatto, CRYO/Orquestra

Demo of Orquestra BPMS implementation for Rio de Janeiro’s municipal processes, e.g., business license requests. Starting from a standard worklist style of process management, generate a process app for a mobile platform: specify the app name and logo, select app functionality based on templates, then preview it and compile for iOS or Android. The .ipa or .apk files are generated ready for uploading to the Apple or Google app stores, although that upload can’t be automated. Full functionality to allow a mobile user to sign up or log in, then access the functionality defined for the app to request a business license. Although an app is generated, the data entry forms are responsive HTML5 so that they are identical to the desktop version. Very quick implementation of a mobile app from an existing process application without having to learn the Orquestra APIs or even do any real mobile development, but it can also produce the source code in case the generated app is only wanted as a quick starting point for a mobile development project.

Dynamic Validation of Integrated BPMN, CMMN and DMN – Denis Gagné, Trisotech

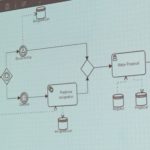

Kommunicator tool based on their animation technology that animates models, which allows tracing the animation directly from a case step in the BPMN model to the CMMN model, or from a decision step to the DMN model. Also links to the semantic layer, such as the Sparx SOA architecture model or other enterprise architecture reference models. This allows manually stepping through an entire business model in order to learn and communicate the procedures, and to validate the dynamic behavior of the model against the business case. Stepping through a CMMN model requires selecting the ad hoc tasks as the case worker would in order to step through the tasks and see the results; there are many different flow patterns that can emerge depending on the tasks selected and the order of selection, and stages will appear as being eligible to close only when the required tasks have been completed. Stepping through a DMN model allows selecting the input parameters in a decision table and running the decision to see the behavior. Their underlying semantic graph shows the interconnectivity of all of the models, as well as goals and other business information.
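
The stage-closing behavior seen while stepping through the CMMN model can be illustrated with a minimal sketch. This is not how Kommunicator works internally; the `Task` and `Stage` classes and the task names are hypothetical, and the only rule modeled is the one described above: a stage becomes eligible to close once all of its required tasks are completed, regardless of which optional tasks were selected or in what order.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """Hypothetical ad hoc task inside a case stage."""
    name: str
    required: bool = False
    completed: bool = False


@dataclass
class Stage:
    """Hypothetical case stage holding a set of ad hoc tasks."""
    name: str
    tasks: list = field(default_factory=list)

    def complete(self, task_name):
        """Mark one task as completed, as a case worker would when stepping."""
        for task in self.tasks:
            if task.name == task_name:
                task.completed = True
                return
        raise KeyError(task_name)

    def can_close(self):
        """Eligible to close only when every required task is completed."""
        return all(t.completed for t in self.tasks if t.required)


if __name__ == "__main__":
    stage = Stage("Assess claim", [
        Task("Verify identity", required=True),
        Task("Request documents"),  # optional ad hoc task
    ])
    stage.complete("Request documents")
    print(stage.can_close())  # required task still open
    stage.complete("Verify identity")
    print(stage.can_close())  # all required tasks done
```

Completing the optional task first leaves the stage open; it becomes closable only after the required task is done, which is the kind of order-dependent behavior the stepping exposes.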

Simplified CMMN – Lloyd Dugan, BPM.com

Last up is not a demo (by design), but a proposal for a simplified version of CMMN, starting with a discussion of BPMN’s limitations in case management modeling: primarily that BPMN treats activities but not events as first-class citizens, making it difficult to model event-driven cases. This creates challenges for event subprocesses, event-driven process flow and ad hoc subprocesses, which rely on “exotic” and rarely used BPMN structures and events that many BPMN vendors don’t even support. Moving a business case, such as an insurance claim, to a CMMN model makes it much clearer and easier to model; the more unstructured the situation is, the harder it is to capture in BPMN, and the easier it is to capture in CMMN. The proposal for simplifying CMMN for use by business analysts includes removing PlanFragment and removing all notational attributes (AutoComplete, Manual Activation, Required, Repetition) that are really execution-oriented logic. This leaves the core set of elements plus the related decorators. I’m not enough of a CMMN expert to know if this makes complete sense, but it seems similar in nature to the subsets of BPMN commonly used by business analysts rather than the full palette.

Finishing bpmNEXT with a presentation on self-managed teams in the context of BPM, not a demo. Contrasting organizational styles of “early structured” (aka “structured”) versus “late structured” (aka “unstructured”), with respective characteristics of centralized versus decentralized, and machine-style versus garden-style. Concepts of sociocracy (on which holacracy is based): a formal method for running self-managed teams that are structured around social relationships, aka dynamic governance. Extremely agile, allows ideas to bubble up from the bottom. Replaces voting with consent, where there is open discussion of options and everyone must consent that a proposal is acceptable; objections must be accompanied by a better proposal. Defining principles: consent governs policy decision making; organizing in circles; double-linking; and elections by consent. Self-managed organizations are inherently agile since good decisions are made where needed and everyone agrees. There may be implications for DMN as to how decisions are modeled and captured.

BPMN and CMMN can cover some of the domains of predictability; we saw other demos this week using other model types that extend further into unpredictable work, such as a process timeline view. There are outstanding issues of whether BPMN should be extended to handle less predictable work, or if CMMN can handle this. Keith ended with the observation that this was the year of DMN at bpmNEXT, and issued a call to action for an open-source implementation of DMN execution with a conformance suite; this is likely more feasible than for BPMN since DMN is more constrained. A lot of great discussion ensued, and Keith will be spearheading a WfMC committee to look at this.