Robert Shapiro spoke on a webinar today about BPMN 2.0, including some of the history of how BPMN got to this point, changes and new features from the previous version and the challenges that those may create, the need for portability and conformance, and an update on XPDL 2.2. The webinar was hosted by the Workflow Management Coalition, where Shapiro chairs the conformance working group.

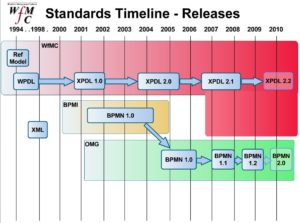

He started out with the history: WPDL began as an interchange format in the mid-1990s, then became XPDL 1.0 around 2001, at about the time the BPMN 1.0 standard effort was being kicked off. For those of you not up on your standards, XPDL is an interchange format (i.e., the file format) and BPMN prior to version 2.0 is a notation format (i.e., the visual representation); since BPMN didn’t include an interchange format, XPDL was updated to provide serialization of all BPMN elements.

With BPMN 2.0, serialization is being added to the BPMN standard, along with many other new components, including formalized execution semantics and the definition of a choreography model. In particular, there are significant changes to conformance, swimlanes and pools, data objects, subprocesses, and events; Shapiro walked through each of these in detail. I like some of the changes to events, such as the distinction between boundary and regular intermediate events, and the concept of interrupting and non-interrupting events. This makes for a more complex set of events, but a much more representative one.

Bruce Silver, who has been involved in the development of BPMN 2.0, wrote recently on what he thinks is missing from BPMN 2.0; definitely worth a read for some of what might be coming up in future versions (if Bruce has his way).

One key thing that is emerging, both as part of the standard and in practice, is portability conformance: one of the main reasons for these standards is to be able to move process models from one modeling tool to another without loss of information. This led to a discussion about BPEL, and how BPMN is not just for BPEL, or even just for executable processes. BPEL doesn’t fully support BPMN: there are things that you can model in BPMN that will be lost if you serialize to BPEL, since BPEL is intended as a web service orchestration language. For business analysts modeling processes – especially non-executable processes – a more complete serialization is critical.

In case you’re wondering about BPDM, which was originally intended to be the serialization format for BPMN: it appears to have become too much of an academic exercise and not enough about solving the practical serialization problem at hand. Even as serialization is built into BPMN 2.0 and beyond, XPDL will likely remain a key interchange format because of the existing base of XPDL support among BPM and BPA vendors, and it is likely to remain a supported standard for years to come even if a majority of vendors pick up the BPMN 2.0 serialization. Nonetheless, XPDL will need to work at remaining relevant to the BPM market in a world of BPEL and BPMN.

The webinar had about 60 attendees, including the imaginatively named “asdf” (check the left side of your keyboard) and several acquaintances from the BPM standards and vendor communities. The registration page for the webinar is here, and I imagine that it will eventually link to the replay. The slides will also be available on the WfMC site.

If you want to read more about BPMN 2.0, don’t go searching on the OMG site: for some reason, they don’t want to share draft versions of the specification except to paid OMG members. Here’s a direct link to the 0.9 draft version from November 2008, but I also recommend tracking Bruce Silver’s blog for insightful commentary on BPMN.

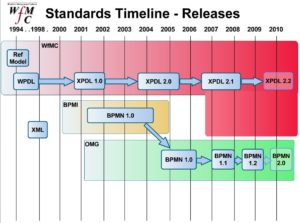

It’s impossible for me to pass up a standards discussion (how sad is that?), so I switched from the business analysis stream to the SOA stream for Heather Kreger’s discussion of SOA standards at an architectural level. OASIS, the Open Group and OMG got together to talk about some of the overlapping standards in this area: they branded the process as “SOA harmonization” and even wrote a paper about it, Navigating the SOA Open Standards Landscape Around Architecture (direct PDF link).

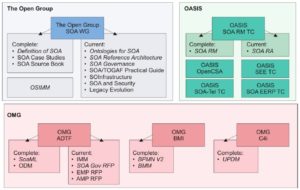

They created a continuum of reference architectures, from the most abstract conceptual SOA reference architectures through generic reference architectures to SOA solution architectures.