This post has been a long time coming. I missed talking to Agent Logic at the Gartner BPM event in Orlando in September since I didn’t stick around for the CEP part of the week, but they persisted, and we had both an intro phone call and a longer demo session in the weeks following. Then I had a crazy period of travel, came home to a backlog of client work and a major laptop upgrade, and seemed to lose my blogging mojo for a month.

If you’re not yet familiar with the relatively new field of CEP (complex event processing), there are many references online, including a recent ebizQ white paper based on their event processing survey which determined that a majority of the survey respondents believe that event-driven architecture comprises all three of the following:

- Real-time event notification – A business event occurs and those individuals or systems who are interested in that event are notified, and potentially act on the event.

- Event stream processing – Many instances of an event occur, such as a stock trade, and a process filters the event stream and notifies individuals or systems only about the occurrences of interest, such as a stock price reaching a certain level.

- Complex event processing – Different types of events, from unrelated transactions, correlated together to identify opportunities, trends, anomalies or threats.

And although the survey shows that the CEP market is dominated by IBM, BEA and TIBCO, there are a number of other significant smaller players, including Agent Logic.

In my discussions with Agent Logic, I had the chance to speak with Mike Appelbaum (CEO), Chris Bradley (EVP of Marketing) and Chris Carlson (Director of Product Management). My initial interest was to gain a better understanding of how BPM and CEP come together as well as how their product worked; I was more than a bit amused when they referred to BPM as an “event generator”. I was somewhat mollified when they also pointed out that business rules engines are event generators: both types of systems (and many others) generate thousands of events to their history logs as they operate, most of which are of no importance whatsoever; CEP helps to find the few unique combinations of events from multiple data feeds that are actually meaningful to the business, such as detecting credit card fraud based on geographic data, spending patterns, and historical account information.
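To make the idea of correlating events across feeds a bit more concrete, here is a minimal Python sketch of the credit card example. It is only an illustration, not how Agent Logic works: the `CardEvent` type, the `suspicious_pairs` function and the one-hour window are all assumptions made up for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import chain


@dataclass
class CardEvent:
    """Hypothetical event shape; real feeds would carry far more detail."""
    card: str
    country: str
    amount: float
    at: datetime


def suspicious_pairs(*feeds, window=timedelta(hours=1)):
    """Merge several feeds and flag consecutive uses of the same card
    in different countries that happen within `window` of each other."""
    last_seen = {}  # card number -> most recent event for that card
    alerts = []
    for event in sorted(chain(*feeds), key=lambda e: e.at):
        prev = last_seen.get(event.card)
        if (prev is not None
                and prev.country != event.country
                and event.at - prev.at <= window):
            alerts.append((prev, event))
        last_seen[event.card] = event
    return alerts
```

Each feed on its own looks unremarkable; it is only the combination (same card, different country, short interval) that is worth an alert, which is the point that the Agent Logic team was making.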

Agent Logic has been around since 1999, and employs about 50 people. Although they initially targeted defence and intelligence industries, they’re now working with financial services and manufacturing as well. Their focus is on providing an end-user-driven CEP tool for non-technical users to write rules, rather than developers — something that distinguishes them from the big three players in the market. After taking a look at the product, I think that they got their definition of “non-technical user” from the same place as the BPM vendors: the prime target audience for their product would be a technically-minded business analyst. This definitely pushes down the control and enforcement of policies and procedures closer to the business user.

They also seem to be more focused on allowing people to respond to events in real-time (rather than, for example, spawning automated processes to react to events, although the product is certainly capable of that). As with other CEP tools, they allow multiple data feeds to be combined and analyzed, and rules set for alerts and actions to fire based on specific business events corresponding to combinations of events in the data feeds.

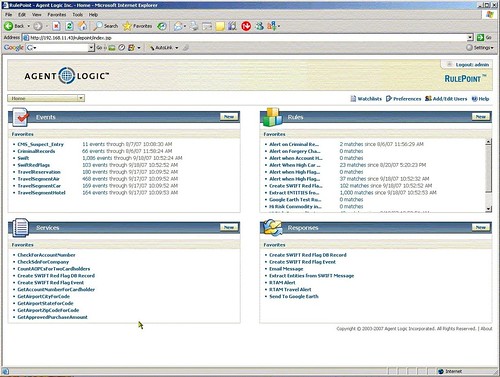

Agent Logic has two separate user environments (both browser-based): RulePoint, where the rules are built that will trigger alerts, and RTAM, where the alerts are monitored.

RulePoint is structured to allow more technical users to work together with less technical users. Not only can users share rules, but a more technical user can create “topics”, which are aggregated, filtered data sources, then expose these to less technical users as input for their rules. Rules can be further combined to create higher-level rules.

RulePoint has three modes for creating rules: templates, wizards and advanced. In all cases, you’re applying conditions to a data source (topic) and creating a response, but they vary widely in terms of ease of use and flexibility.

- Templates can be used by non-technical users, who can only set parameter values for controlling filtering and responses, and save their newly-created rule for immediate use.

- The wizard creation tool allows for much more complex conditions and responses to be created. As I mentioned previously, this is not really end-user friendly — more like business analyst friendly — but not bad.

- The advanced creation mode allows you to write DRQL (detect and response query language) directly, for example, `when 1 "Stock Quote" s with s.symbol = "MSFT" and s.price > 90 then "Instant Message" with to="[email protected]",body="MSFT is at ${s.price}"`. Not for everyone, but the interesting thing is that by using template variables within the DRQL statements, you can convert rules created in advanced mode into templates for use by non-technical users: another example of how different levels of users can work together.
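The relationship between an advanced-mode rule and a template can be sketched in Python as a function that takes the parameters and hands back a ready-to-run rule. This is an analogy only, not DRQL and not RulePoint's API: `StockQuote`, `price_alert_template` and the `analyst@example.com` address are hypothetical names invented for the illustration.

```python
from dataclasses import dataclass


@dataclass
class StockQuote:
    """Hypothetical stand-in for one event on a "Stock Quote" topic."""
    symbol: str
    price: float


def price_alert_template(symbol, threshold, recipient):
    """Fix the template parameters and return a concrete rule.

    The returned rule maps one quote to a response (a dict describing
    the message to send) or to None when the condition is not met.
    """
    def rule(quote):
        if quote.symbol == symbol and quote.price > threshold:
            return {"to": recipient, "body": f"{symbol} is at {quote.price}"}
        return None
    return rule


# A non-technical user only supplies the three parameter values.
msft_rule = price_alert_template("MSFT", 90, "analyst@example.com")
```

The technical user writes the condition and response once; everyone else just fills in a symbol, a threshold and a recipient.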

Watchlists are lists that can be used as parameter sets, such as a list of approved airlines for rules related to travel expenses, which then become drop-down selection lists when used in templates. Watchlists can be dynamically updated by rules, such as adding a company to a list of high-risk companies if a SWIFT message is received that references both that company and a high-risk country.
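As a rough sketch of a rule that updates a watchlist, assume a message is a plain dictionary listing the companies and countries it references. The `apply_watchlist_rule` function, the message shape and the sample names in the usage below are assumptions for illustration, not anything taken from the product.

```python
def apply_watchlist_rule(message, risky_countries, risky_companies):
    """Add a message's companies to the high-risk company watchlist
    when the same message also references a high-risk country.

    `risky_companies` is updated in place; the newly added names are
    returned so that a caller could raise an alert for them.
    """
    if not risky_countries & set(message["countries"]):
        return set()
    added = set(message["companies"]) - risky_companies
    risky_companies.update(added)
    return added
```

For example, with `risky_countries = {"Freedonia"}` (a made-up country) and an empty company watchlist, a message referencing "Acme Ltd" and "Freedonia" puts "Acme Ltd" on the list, so later rules that use the watchlist pick it up automatically.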

RulePoint includes a large number of predefined services that can be used as data sources or responders, including SQL, web services and RSS feeds. You can also create your own services. Because web services can act both as a data source and as a method of responding to an alert, Agent Logic can do things like kick off a new fraud review process in a BPMS when a set of events occurs across a range of systems that indicates a potential for fraud.

Lastly, in terms of rule creation, there are both standard and custom responses that can be attached to a rule, ranging from sending an alert to a specific user in RTAM to sending an email message to writing a database record.

Although most of the power of Agent Logic shows up in RulePoint, we spent a bit of time looking at RTAM, the browser-based real-time alert manager. Some Agent Logic customers don’t use RTAM at all, or only for high-priority alerts, preferring to use RulePoint to send responses to other systems. However, compared to a typical BAM environment, RTAM provides pretty rich functionality: it can link to underlying data sources, for example, by linking to an external web site with criminal record data on receiving an alert that a job candidate has a record, and allows for mashups with external services such as Google maps.

It’s also more of an alert management system than just a monitoring tool: you can filter alerts by the various rules that trigger them, and perform other actions such as acknowledging the alert or forwarding it to another user.

Admittedly, I haven’t seen a lot of other CEP products to this depth to provide any fair comparison, but there were a couple of things that I really liked about Agent Logic. First of all, RulePoint provides a high degree of functionality with three different levels of interfaces for three different skill levels, allowing more technical users to create aggregated, easier-to-use data sources and services for less technical users to include in their rules. Rule creation ranges from dead simple (but inflexible) with templates to roll-your-own in advanced mode.

Secondly, the separation of RulePoint and RTAM allows the use of any BI/BAM tool instead of RTAM, or just feeding the alerts out as RSS feeds or to a portal such as Google Gadgets or Pageflakes. I saw a case study of how Bank of America is using RSS for company-wide alerts at the Enterprise 2.0 conference earlier this year, and see a natural fit between CEP and this sort of RSS usage.

Update: Agent Logic contacted me and requested that I remove a few of the screenshots that they don’t want published. Given that I always ask vendors during a demo if there is anything that I can’t blog about, I’m not sure how that misunderstanding occurred, but I’ve complied with their request.