Neil Ward-Dutton gave a webinar this morning about delivering on the promise of BPM: how we have to get past the vision of BPM as model-driven development for rapid application delivery, and focus on the bigger picture of enabling continuous process improvement through technology use. There are a lot of challenges when you move past departmental solutions and start rolling out BPM company-wide: you need to scale communication, collaboration, change management and governance to match your deployment.

He started with the key drivers for large-scale business process improvement: globalization, such that your customers, partners and competition can come from anywhere; transparency in terms of regulations and open competition; and smart, connected markets where you need to engage your customers in the online world. Both business and IT recognize the need for flexibility, innovation, value and differentiation in order to exist in this changing world. BPM is important to this because it’s not just about model-driven development, and about building applications faster: it’s about creating a better way to manage your business and its key processes.

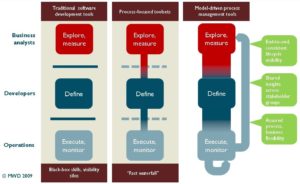

It’s this combination of management philosophy, efficiency optimization methods and technology that makes it powerful, and allows you to improve operational efficiency, support innovation, and enjoy flexibility in your business model. Process also creates a common language for business and IT to collaborate, and we’re seeing that reflected in the BPM tools that allow business and IT to each have their own perspective on a shared process model. He made an excellent point that just using a process-focused toolset doesn’t give you a true agile process improvement environment: it just gives you a fast waterfall method. You really need to have model-driven process management in order to have that unbroken cycle of exploring/measuring, defining and executing/monitoring your processes.

I loved the phrase on one of his slides on scaling communication and collaboration – “You can’t scale a BPM initiative if you rely on a small ‘priesthood’ to carry out Six Sigma ceremonies” – with his point being that you have to have the culture of process innovation exist throughout your organization. He also pointed out that you have to be able to manage process model assets effectively in order to be able to locate and reuse models as required: something that requires a proper model repository, not a collection of files out on a shared network drive somewhere.

We also heard from Brandon Baxter of Lombardi (who sponsored the webinar) about how they address model-driven development in Teamworks, where all authors work in a single shared environment. In addition to providing tools for both business analysts and IT, they provide the ability to have “playbacks” to show the current state of the process to people who aren’t involved directly in the process modeling. He described a situation where they took a huge requirements document and a tsunami of Visio diagrams from a client, did an initial version in Teamworks, then did a playback to the group that provided the requirements: not surprisingly, the process wasn’t at all what they wanted, even though it was what they asked for. I see this all the time with clients, and push for an early prototype/playback as soon as possible in order to validate the requirements and process flows, but sometimes it’s hard for them to believe that all those requirements that they spent time gathering, writing and approving aren’t really the best way to go about developing their processes.

He also spent some time talking about their asset repository; although I haven’t done an in-depth review of Teamworks for a while, I recall that it’s very robust. Maybe I’m so steeped in the concepts of the value of content management that management of process assets seems like a no-brainer to me: any business content (including process models) that has any value should be in some sort of controlled environment that allows it to be secured (if necessary), versioned, and easily found and reused.

There was a good Q&A at the end, including some questions on executable versus non-executable models, and the value of importing non-executable models into a BPMS. Interchange formats for exchanging models between pure modeling tools and executable BPM tools are necessary, but there is a whole lot of the process that will likely not be imported since it’s not executable, as well as a lot of enrichment that needs to be done to the processes once they’re in the BPMS. Due to both of these factors, round-tripping is often not possible between the modeling and execution environments. I had a conversation with a customer on exactly this issue yesterday; to those who haven’t worked with these systems, it’s hard to grasp why you can’t just round-trip the models (answer: the modeling environment may not support the execution enrichment) or why you would ever need to change a model in the execution environment rather than do it in the modeling environment and re-import (answer: agility).
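
To make the round-tripping problem a bit more concrete, here is a minimal Python sketch. It is not taken from the webinar or from any BPM product: the `Step` class, the `import_to_bpms` and `export_to_modeler` functions, and the `claims-intake` service name are all hypothetical, and real interchange formats carry far more detail. It only illustrates the two losses described above: non-executable steps are dropped on import, and execution enrichment is dropped on export.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    name: str
    executable: bool = True
    # Execution enrichment added inside the BPMS (hypothetical examples:
    # service bindings, form definitions, routing rules).
    enrichment: dict = field(default_factory=dict)


def import_to_bpms(model):
    """Keep only the executable steps; purely descriptive steps are dropped."""
    return [Step(s.name) for s in model if s.executable]


def export_to_modeler(process):
    """Assume the modeling tool cannot store enrichment, so it is discarded."""
    return [Step(s.name) for s in process]


# A model as drawn in a pure modeling tool, including one manual step.
modeled = [
    Step("Receive claim"),
    Step("Phone customer", executable=False),
    Step("Approve claim"),
]

# Import into the execution environment, then enrich a step there.
deployed = import_to_bpms(modeled)
deployed[0].enrichment["service"] = "claims-intake"

# Going back to the modeling tool loses the enrichment, and the manual
# step that was dropped on import never comes back.
round_tripped = export_to_modeler(deployed)
```

Under these assumptions, `round_tripped` matches neither the original model (the manual step is gone) nor the deployed process (the service binding is gone), which is why a re-import would overwrite work done in the execution environment.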

The webinar was at a fairly basic level, but provided some great information for those who are still new to BPM. In a survey taken during the webinar, it looked as if a majority of the people were either gathering information or just getting started. Baxter’s part was mostly unique to Lombardi’s product, although many of the other BPM products out there have a similar set of features.

I accessed the live version of the webinar here, but I’m not sure if the replay will be there as well.

Sandy, this is a very nice overview – thanks very much for the write-up! I’m glad it all made sense and flowed.

Thanks for joining the webinar and the write-up. I think we are long overdue on a meeting to catch you up on the latest in Teamworks.

Besides raising our competitive juices a bit, your statement at the end of the write-up brought me up short – “… many other BPM products out there have similar features”. That was the point of the webinar – most don’t. Having a unified IDE or repository is not enough. Yet most BPM solutions still struggle to meet that basic criterion here in 2009. Delivering on the promise of BPM means solving the hard parts of model-driven development/architecture – managing assets, version management for the masses (not the Java priests). Many (most from our perspective) BPM solutions have not even remotely addressed these problems. We have – and it takes a lot of work to do it. Don’t take our word for it – dig deeper beyond the “model-driven” claims.

Hi Brandon, I knew that I could get you to comment if I “insulted” you by comparing you to other vendors 🙂 We are long overdue for a briefing.

> sometimes it’s hard for them to believe that all those requirements that they spent time gathering, writing and approving aren’t really the best way to go about developing their processes

Good point. And sometimes it’s a threat for a BPM project.

We’ve been in a similarly weird situation: the project didn’t go further after a successful PoC phase because (I guess) the customer couldn’t admit that the business issue they had worked on so heavily could be resolved in such an elegant and efficient way. It could raise an unpleasant question – what are the two teams (“quality” and IT) good for? – so they finally buried the project.

“BPM: handle with care!” – be good yet not too good as a BPM consultant 🙂

Anatoly, I think that you’re right – many people tied to the old methods of doing things are threatened by newer methods that cut out a lot of the work that they are doing. Job security!

This all makes sense, and this is a strong endorsement of the “model preserving strategy”:

http://kswenson.wordpress.com/2009/02/09/model-strategy-preserving-vs-transforming/

I am seeing more and more products that talk about a consistent model through all phases of the life cycle. Yet the press (sorry, overgeneralizing here) seems to focus on automatic transformation to executable form. Not sure I like the term “Model Driven Process Management” because it is so close to “Model Driven Architecture”. The term “MDA” is defined by the OMG as involving transformation, but that is really just “Fast Waterfall” (I like that term). Neil is absolutely right: for real continuous improvement of processes, you need more than automatic generation.